Nigel Henderson

Photograph of Freda Paolozzi ([c.1950s])

Tate Archive

How, why, what and who

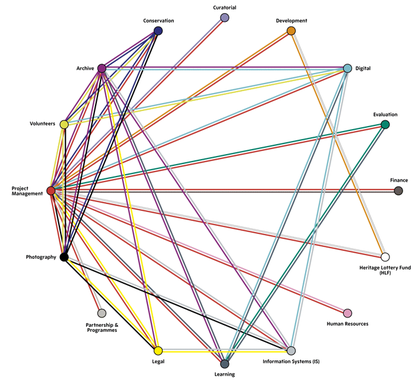

Digitisation processes, or 'workflows' – the steps taken in order to get collections digitised and published – are invariably complex. Moreover, workflows will differ from one institution to another, as they will be designed and implemented to suit specific requirements, in response to such things as the databases used, the personnel available and the scope of work, among other contingencies.

If digitisation is viewed as a long-term commitment, then it follows that workflows benefit from being designed with longevity in mind. To best serve audiences, and to support the parent institution, digitisation workflows – just like the programmes of work they are designed to deliver – should be researched, resourced, robust and resilient.

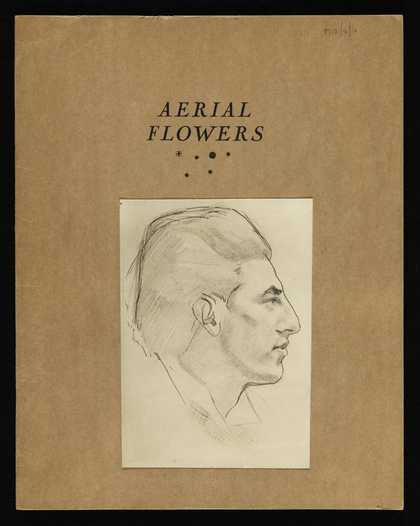

Paul Nash, recipient: Eileen Agar

‘Aerial Flowers’, by Paul Nash (1947)

Tate Archive

Planning ahead

In our case, we scoped the succession plan and legacy outcomes for Archives & Access prior to bid submission. Put simply, the long-term goal was to create a robust and resilient workflow that would support archive digitisation activities beyond the run of the project, with costs attributed accordingly. As a result, Archives & Access secured a grant that allowed funds to be invested in both workflow infrastructure (hardware and software) and staff training and development.

Accessible, or accessed?

Further, the bid detailed a project that undertook digitisation and publication delivered in conjunction with learning and outreach activities. This approach was taken in order to ensure the published collections reached widened audiences: that the collections were not simply accessible, but accessed (read more about the project's outreach activities).

We already knew from working with local school and community groups that we had a rich collection with lots of potential. However, pre-project much of our evidence was anecdotal. To understand audience needs better, we commissioned an external agency to undertake a series of focus groups with our target audiences. We also drew on external evaluation and research from across the sector, including research commissioned by the HLF, to situate our project in the wider context of online engagement and participation.

Gathering this evidence helped us both to determine the project scope and to detail what we needed – in terms of technical infrastructure and staff know-how – to deliver the vision and ensure the project had a productive legacy.

Outcomes

By investing in hardware and software, including the High Value Digital Asset (HVDA) storage (since developed further through other projects for the digital preservation of artworks) and a digitisation imaging suite in photography, Archives & Access increased our long-term capacity to digitise.

By investing in staff training, we were endowed with a skilled and inter-connected workforce able to continue archive digitisation activity once the Archives & Access project activity had concluded.

The delivery of digitisation in conjunction with outreach informed the selection criteria, and helped shape the production of a range of digital tools developed to encourage discovery of the published collections.

This meant that the project would become a programme upon completion, and that the workflow would be embedded as business as usual.

Further, through encouraging conversation and practice sharing, the project helped us to engage more deeply with sector-wide digitisation activity. We have found it particularly useful to discuss, troubleshoot, and reflect with colleagues undertaking a wide range of digitisation schemes. No two archive digitisation projects will be exactly alike, but by sharing knowledge, experience, and visions, we can aim, as a sector, to develop our resilience, capacity and expertise as we engage in this inherently collaborative field.

Recommendations

Below is a series of recommendations derived from our experience with the Archives & Access digitisation and outreach project. You will find a range of guidance, from advice on designing a suitable digitisation workflow, to information about how we selected material for digitisation, as well as pointers on image capture, legal issues to be aware of, and the approach we took to subject indexing the collections published.

Archive cataloguing

The Archives & Access project adopted and adapted extant back-end cataloguing and IT systems to enable archive digitisation to happen within existing channels at Tate. This approach was taken to produce a sustainable outcome: rather than designing workflows to suit one-off digitisation projects, the workflow developed for Archives & Access would serve our future digitisation activities.

This required Tate Archive images and metadata to be integrated into the existing technical architecture for publishing the art collection online, and for the records to be published via the front-end interface. Though this approach presented a number of technical challenges, the integration of artworks and archive materials ensured that the archive pieces would be more readily surfaced in search results and would benefit from future functionality and design updates rolled out across the website.

Challenges and solutions

Archive data is catalogued in a different way from that relating to artworks in our collection: artworks are catalogued as single artefacts, whereas archive materials are catalogued hierarchically (where similar types of items are grouped together in series, and cataloguing information is placed at the most appropriate level of the catalogue).

We decided to draw the archive cataloguing information from CALM – its native database – into TMS, where the artworks are catalogued. In doing this, we had all the information about the digitised pieces located within one database. However, this process required us to map fields in CALM to match those in TMS, to make sure that the same information was in the correct place in both systems. A script was written to pull the information from one database into the other. For the transfer to work, all the archive material selected for digitisation had to be manually catalogued so that every single piece had an individual reference number. This was a time-consuming process, but it enabled the data to be transferred from CALM to TMS and then on to iBase Manager. As a result, all the cataloguing records were in the image management system – including handling instructions from the conservators and any requests to redact information – enabling exact matching of the images to the catalogue.
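The field mapping described above can be illustrated with a minimal Python sketch. The field names on both sides are hypothetical (the text does not list the actual CALM or TMS fields), and the check for a reference number simply reflects the point that every piece needs its own reference before it can be transferred.

```python
# Hypothetical field names: the real CALM and TMS schemas are not given in the text.
CALM_TO_TMS = {
    "RefNo": "ObjectNumber",
    "Title": "Title",
    "Date": "Dated",
    "Description": "Description",
}


def map_record(calm_record: dict) -> dict:
    """Translate one CALM-style record into TMS-style field names.

    Raises ValueError if the piece has no individual reference number,
    because images are matched to catalogue entries through it.
    """
    if not calm_record.get("RefNo"):
        raise ValueError("record has no individual reference number")
    # Fields absent from the source record are carried across as empty strings.
    return {
        tms_field: calm_record.get(calm_field, "")
        for calm_field, tms_field in CALM_TO_TMS.items()
    }


# Illustrative record only; "EXAMPLE/1/1" is not a real reference.
example = {"RefNo": "EXAMPLE/1/1", "Title": "Letter", "Date": "1947"}
mapped = map_record(example)
```

Keeping the mapping in a single table like this makes it easy to review with cataloguers before any data is moved.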

Recommendations from the archive team

- If following a comparable method to the above, do not underestimate the need to check original cataloguing (as the project progressed, we discovered that there were more legacy cataloguing issues than we had first imagined)

- Think carefully about what exactly you want to do as you design your approach: do you need to capture everything, or would excluding the more complex or most fragile items create a much simpler workflow?

- Make sure there is enough contingency, in both budget and time

- Do not underestimate the impact on time staff changes will have; do not assume everyone will stay for the duration of their contracts

- Acknowledge that errors will be made, and make sure the workflow is designed to accommodate this

- Think about the project team in terms of a forum for communication and collaboration: a chance to learn about your colleagues' working practices

- Do not underestimate how much of an impact a large digitisation project will have on the day-to-day running of the archive - if digitisation is a priority, make sure that this is acknowledged and understood

- Make sure everyone has access to all the databases and someone knows how to use every system in the workflow

Archives & Access endeavoured to tell the story of British art and its social context to widened audiences.

To do this, our archivists, exhibition curators, and colleagues from the Learning department selected archive materials that would be of public interest, as well as items from the archives of less well known artists and historically under-represented groups. Further, since the Archives & Access Learning Outreach programme had a UK-wide reach, at least three artists’ archives were selected for each region and nation of the country: Scotland, North West (including Cumbria and Lancashire), North East (including Yorkshire and Northumbria), Northern Ireland, Midlands, East, Wales, London and Home Counties, South West, and South East.

The selection process took place over the course of several months, and the following questions were asked of every candidate for digitisation:

- Does this item have a UK-wide reach?

- Does this item show an artist's connection and relationship with an area of the UK?

- Is the creator of the item a less well-known artist and / or historically under-represented?

- Is the artist or creator an émigré, female, LGBTQ+ or BAME?

- Does this item contribute to providing insight into a broad range of artists' practices?

- Does this item provide background or contextual information about artists' practices or processes?

- Is this a challenging item – 3D pieces, fragile items, or materials with complicated copyright issues? A range of such items were chosen specifically in order to test the tolerances of the digitisation workflow.

The answers were tabulated in an Excel spreadsheet, which provided us with a comprehensive document detailing how well each piece would support the project remit.
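As a rough illustration of this kind of tabulation, the sketch below writes one row per candidate item with a yes/no answer for each question. The column labels are shortened, hypothetical versions of the questions above, and the `total` column is an illustrative addition rather than something the project is described as using.

```python
import csv
import io

# Shortened, hypothetical labels for the selection questions.
CRITERIA = [
    "uk_wide_reach",
    "regional_connection",
    "under_represented",
    "insight_into_practice",
    "contextual_information",
    "challenging_item",
]


def score(answers: dict) -> int:
    """Count how many selection questions were answered 'yes'."""
    return sum(1 for criterion in CRITERIA if answers.get(criterion, False))


def tabulate(candidates: dict) -> str:
    """Return CSV text with one row per candidate and a total column."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["reference"] + CRITERIA + ["total"])
    for reference, answers in candidates.items():
        row = ["yes" if answers.get(c, False) else "no" for c in CRITERIA]
        writer.writerow([reference] + row + [score(answers)])
    return buffer.getvalue()


# Illustrative candidate only; "ITEM-1" is not a real reference.
candidates = {"ITEM-1": {"uk_wide_reach": True, "challenging_item": True}}
table = tabulate(candidates)
```

The resulting CSV text can be opened directly in a spreadsheet application.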

The unintended consequences of digitisation

As well as the question of what to digitise, we should also be continuing to ask what the consequences of digitisation will be.

Those collections made digitally available are, by intention, more accessible - and more likely to enter wider circulation. By making some collections available over others, do we risk (inadvertently) creating cultural biases? This important issue should be considered and discussed whenever digitisation is embarked upon.

Selection is a significant issue, and one that will require tailored approaches from institutions that take into account the nature of their collections. Institutions and publics alike will benefit from strategic selection choices.

Before the archive material is photographed, it should be assessed by one or more conservators and treated where necessary to ensure the material is not at risk when being handled in the image capture studio.

Assessment Process

Conservators first surveyed the materials. This meant examining the archive items and noting any work needed to make the objects safe for digitisation, or to uncover images or information that needed to be captured.

The next step is treatment.

Project conservators took the view that conservation for digitisation is not necessarily about fixing everything – it is more about making strategic decisions. Conservators will need to decide whether they fix every small tear and unfold every folded corner – if all fixes are made, more time will need to be allocated to this process.

Our conservators took the following into consideration during the conservation for digitisation process:

- Is the visibility of the image affected?

- Are there folds or creases that cover or change the image?

- Are there tears through the image?

- Were these there when the artist created the object?

- Will the object be damaged by the digitisation process?

- Will this tear extend when the object is handled?

- How far can this book be opened safely without putting strain on the binding?

- Is this damage or is it part of the object or its history?

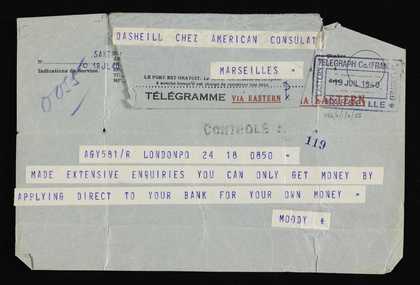

The key points of guidance to take into consideration when digitising archive material, as outlined by our Intellectual Property Manager, are:

- Does the material contain any personal data (i.e. references to living persons)? If so, data protection rules apply

- Might the material be considered obscene, defamatory or illegal? If so, please alert your project's legal advisor / team immediately

- When cataloguing and digitising large collections, please alert the legal team immediately if there are questions over the provenance or ownership of individual or loose items within that archive

- If images of children are depicted (including of children who are now grown-up), you should alert the legal team

Copyright is a member of the family of ‘Intellectual Property Rights’ (IPRs). Copyright is the exclusive legal right of the copyright holder to control the copying of certain kinds of work for a set period of time. The right arises automatically in the UK, and is granted to the original creator of a literary, dramatic, musical or artistic work, enabling them to control the way in which their works are used or reproduced irrespective of who owns the physical work itself.

Copyright is a property right, and just like any other property right it can be sold, assigned or bequeathed from one person to another for the duration that copyright subsists. Because of this the copyright holder may not be the original creator of the work. Identifying and locating the copyright holder(s) is one of the challenges of licensing copyright works, particularly as time progresses.

The key points to consider in relation to copyright when digitising archive material are:

- Is the material in copyright (i.e. by someone still alive or who died in the last 70 years)? If so, your copyright advisor / team will need to clear it for online (and other) uses

- Is the content previously-unpublished literary material? If so, regardless of its age, it will still be in copyright

- Always aim to indicate the creator of the work where known. If you know the work is by an unknown individual, please mark it as "Unknown creator"

- Always aim to indicate the date of the work where known. If you don't know the date of the work, please estimate as closely as possible using standard indicators, e.g. "Circa 1950s"

- Always aim to indicate ALL the death dates (if applicable) of ALL creators for a particular piece. For jointly-created work, copyright expires 70 years after the death of the last-surviving creator

- Are there copyright works reproduced within another work (e.g. an image of a sculpture within a photo of an artist in her studio, or music accompanying an interview)? If so, please provide as much copyright data as you can about ALL relevant copyright works

- Are there any other types of IP depicted in the piece (e.g. a trade mark reproduced in a sketch book, or designer furniture shown in a photo)? If so, please provide as much IP data as you can (dates, designers' names, etc.)

- Make sure you clearly define which class of copyright work the piece belongs to (e.g. literary work, artistic work, film, etc.). The more detail, the better (e.g. letter, drawing, photograph, etc.), as this really helps the copyright team

- With letters, copyright belongs to the writer, not to the recipient. So please provide as much useful copyright data as you can about the writer

- Any bleed-throughs and alternative views will need to be highlighted so they get additional copyright clearance
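The 70-year rule in the points above can be sketched as a simple first-pass check. This is only an illustration: it assumes the term runs to the end of the calendar year 70 years after the death of the last-surviving creator, it treats a missing death date as "still in copyright", and it deliberately ignores the special cases mentioned above, such as previously-unpublished literary material. The function name is hypothetical and the result is no substitute for review by a copyright advisor.

```python
from typing import List, Optional

COPYRIGHT_TERM_YEARS = 70  # term quoted in the guidance above


def in_copyright(death_years: List[Optional[int]], current_year: int) -> bool:
    """First-pass check for a work with one or more creators.

    death_years holds one entry per creator; None means the creator is
    still alive or the death date is unknown, so the work is treated as
    in copyright.
    """
    if not death_years:
        raise ValueError("at least one creator is required")
    if any(year is None for year in death_years):
        return True
    # For jointly created work the term is counted from the last death.
    last_death = max(death_years)
    # Assumption: protection lasts until the end of the 70th calendar year.
    return current_year <= last_death + COPYRIGHT_TERM_YEARS
```

For example, `in_copyright([1940, 1960], 2025)` returns `True`, because the term is counted from the later of the two deaths.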

Archives & Access integrated the archive cataloguing system CALM with the artwork cataloguing system TMS, our bespoke content management system CIS, and the digital asset management system iBase Manager.

This systems integration enabled migration of archive collections from CALM into TMS for copyright management, for linking to existing and new constituents – bringing the archive and art collections together – and migration of archive collections to CIS and iBase Manager to facilitate photography and subject indexing.

By migrating archive records to TMS, CIS and iBase Manager, archive collections are made available to the copyright assistant, photography staff and the subject indexer in order for them to undertake their work. The systems integration is fully automated and requires no manual intervention to migrate the data from source to destination system.

A summary of the work carried out by Tate's Information Systems staff is as follows:

The archive collections are extracted from CALM in the form of an XML file. The extract is controlled by collections being flagged as ‘digitisation ready’. The extract is automated to run weekly, prior to TMS loading.
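A flag-controlled extract of this kind might look something like the sketch below. The XML layout, the element name `collection` and the attribute `digitisation_ready` are all assumptions made for illustration; the real CALM export format is not described here.

```python
import xml.etree.ElementTree as ET

# Hypothetical export format; the references are placeholders.
SAMPLE_EXPORT = """\
<collections>
  <collection ref="EXAMPLE/1" digitisation_ready="true">
    <title>Letters</title>
  </collection>
  <collection ref="EXAMPLE/2" digitisation_ready="false">
    <title>Sketchbooks</title>
  </collection>
</collections>
"""


def ready_collections(xml_text: str) -> list:
    """Return the references of collections flagged as digitisation ready."""
    root = ET.fromstring(xml_text)
    return [
        collection.get("ref")
        for collection in root.iter("collection")
        if collection.get("digitisation_ready") == "true"
    ]


ready = ready_collections(SAMPLE_EXPORT)
```

Only the flagged collection is passed on to the next stage; everything else stays in the source system until it is marked as ready.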

Rules Engine and TMS loader

The Rules Engine and TMS loader is an application developed to verify the quality and consistency of archive data extracted from CALM, prior to loading into TMS. The rules engine prevents corruption of TMS and ensures archive data conforms to agreed standards. Only error-free collections are migrated and stored in TMS. A report log is produced for Archive staff to review and correct errors. The rules engine and loader run weekly as a scheduled automated job. A new use of TMS was introduced to support storage of archive collections whilst ensuring the integrity of the art collection and the use of TMS to manage the art collection. New controlled vocabularies were created in TMS for both storage of archive collections and management of the copyright process, including recording Creative Commons permissions.
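The general pattern described here (validate every record, load only collections with no errors, and log the rest for correction) can be sketched as follows. The rules, field names and function names are hypothetical; the actual rules agreed at Tate are not listed in the text.

```python
from typing import Callable, Dict, List, Optional, Tuple

Record = Dict[str, str]
Rule = Callable[[Record], Optional[str]]


def require(field: str) -> Rule:
    """Build a rule that reports an error when a field is missing or blank."""
    def rule(record: Record) -> Optional[str]:
        if not record.get(field, "").strip():
            return f"missing {field}"
        return None
    return rule


# Hypothetical rule set, for illustration only.
RULES: List[Rule] = [require("reference"), require("title"), require("date")]


def validate_collection(records: List[Record]) -> List[str]:
    """Apply every rule to every record and collect error messages."""
    errors = []
    for index, record in enumerate(records):
        for rule in RULES:
            message = rule(record)
            if message:
                reference = record.get("reference", "?")
                errors.append(f"record {index} ({reference}): {message}")
    return errors


def load_error_free(collections: Dict[str, List[Record]]) -> Tuple[List[str], List[str]]:
    """Return (collections safe to load, report log lines for the rest)."""
    to_load, report = [], []
    for name, records in collections.items():
        errors = validate_collection(records)
        if errors:
            report.extend(f"{name}: {error}" for error in errors)
        else:
            to_load.append(name)
    return to_load, report
```

Rejecting a whole collection when any record fails mirrors the "only error-free collections are migrated" behaviour, at the cost of delaying valid records until the errors are corrected.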

TMS – CIS migration

The CIS database has been enhanced to include new tables and relationships to store archive collection data and related information (image and subject indexing). The existing data migration procedures and scripts used to transfer the art collection to CIS have been enhanced to include the migration of archive collections. Only collections ready for publication are migrated – based on a ‘ready for publication’ data attribute captured in TMS for each collection. Data is migrated weekly as part of the automated CIS refresh.

CIS – iBase Manager

The iBase Manager database has been enhanced to support the storage of archive collections and introduction of PREMIS for digital preservation – this work was specified by us and executed by iBase. CIS archive data was ‘pushed’ into iBase Manager weekly as an automated process and stored in new archive data structures. A new archive PES was also created by iBase to facilitate batch processing of images and use of HVDA storage.

Subject Indexing Extract/load script

New scripts were developed to extract subject indexing data related to archive items from iBase Manager and to store data in CIS linked to corresponding archive items.

Image Loader

A new image loader was developed (to replace the previous loader) to create four surrogate images for the website from the 4-pack PNG image created by the PES process, for both archive and art collections. The loader updates CIS with the corresponding image records and sends the surrogate images to the external web server. This process is automated to run daily and includes updating image IPTC data – see below.

IPTC Stamper

The existing application to update IPTC data in images has been enhanced to include stamping of archive images with descriptive metadata and copyright credits, including Creative Commons. Images will be re-generated and re-stamped automatically if descriptive or copyright data changes.
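The text does not say how the stamper detects that descriptive or copyright data has changed. One common approach, shown here purely as an assumption, is to store a fingerprint of the metadata at stamping time and compare it on each run. The function names and metadata keys are hypothetical.

```python
import hashlib
import json
from typing import Optional


def metadata_fingerprint(metadata: dict) -> str:
    """Return a stable hash of the metadata, independent of key order."""
    canonical = json.dumps(metadata, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_restamp(metadata: dict, last_stamped: Optional[str]) -> bool:
    """True when the image was never stamped or its metadata has changed."""
    return metadata_fingerprint(metadata) != last_stamped


# Illustrative metadata only.
current = {"title": "Example title", "credit": "Example credit line"}
stored = metadata_fingerprint(current)
```

With this approach an unchanged record is skipped on the daily run, while any edit to the description or credit line triggers a fresh stamp.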

Collection API

The existing collection API used to facilitate publication of Art & Artists has been extended to expose archive data stored in CIS. Our digital team use the enhanced API to develop the new Art&Artists&Archive web front-end.

Miscellaneous scripts

Several SQL scripts and TMS reports have been developed to assist archive staff in their additional TMS cataloguing (e.g. to propagate constituent links to avoid excessive manual data entry), and to enable IS to check completeness of cataloguing and to correct errors where necessary.

Training and support

TMS support staff provide training and ongoing support to archive staff and copyright staff so that they can carry out TMS data entry. For each newly migrated collection, staff also create new constituents and run propagation and data consistency checking scripts, as required.

To capture images of the selected archive pieces, our photography team used a Hasselblad H3D, a Nikon D810, a Kodak Creo flat scanner, an Atiz book scanner, and a lightbox.

In response to the selection criteria, materials were initially sorted by region and then by medium. To aid capture they were then broken down into the following five categories:

- Loose 2D items A3 or smaller (includes photographs)

- Loose 2D items larger than A3

- Notebook/sketchbook pages

- 3D objects

- Transparent material e.g. 35mm, 6x6cm and 5x4” film originals

Photographing loose 2D flat items

Equipment used: Hasselblad H3D

Pre-capture

- Photographer requests a batch of material for digitisation. The material arrives with a spreadsheet which lists each piece in the batch

- Photographer records the delivery in a studio log-book

- Material is arranged in size order, and an appropriately sized storage case is sourced

- Camera is mounted to the copy stand and the tether cable attached

- Ensure the camera is level using a spirit level

- First piece is placed on the copy stand

- Photographer looks through the viewfinder and adjusts work to camera - lowering / raising baseboard if necessary

- Photographer makes a marker with rulers - 1cm away from edge of work on the longest side

- Lights are set up at an equal distance and height from the baseboard. Polyboards are used to screen light

- All other lights are turned off

- Ensure baseboard is level using a spirit level

- Light is measured using light meter. All four corners and the middle of the work should be even. If not, adjust lights until they are

- Begin capture
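The evenness check on the light meter readings in the steps above can be expressed as a simple comparison. The sketch assumes readings are recorded as numbers at the four corners and the centre, and the tolerance value is a placeholder, since the text does not state an acceptable spread.

```python
POSITIONS = ("top_left", "top_right", "bottom_left", "bottom_right", "centre")


def lighting_is_even(readings: dict, tolerance: float = 0.1) -> bool:
    """Check that the five meter readings differ by no more than tolerance.

    The default tolerance is a hypothetical value; choose one that suits
    the units your light meter reports.
    """
    missing = [position for position in POSITIONS if position not in readings]
    if missing:
        raise ValueError(f"missing readings for: {', '.join(missing)}")
    values = [readings[position] for position in POSITIONS]
    return max(values) - min(values) <= tolerance
```

If the function returns `False`, the lights are adjusted and the five readings are taken again before capture begins.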

Post-capture

- Re-arrange archive material in the correct order and file away

- Pack camera, light meter, colour checker and any other items away

- Use a lint roller to clean the black velvet on the baseboard

- Turn off all equipment and leave area as you found it

Bound Volumes (notebooks / sketchbooks)

Equipment used: Atiz Book Scanner

Pre-capture

- Photographer requests a batch of material for digitisation

- Photographer records the delivery in a studio log-book

- Photographer washes hands thoroughly before handling material

- Review material to determine which arms are needed on the book scanner

- Maximum size for work is 16.5 x 24.2 inches. If material is A2 or less use blue arms/camera baseplate, if larger use red

- Ensure tether cables/batteries are connected securely to the computer and outlet / extension cables

- Clean the black velvet with a lint roller and make sure masking tape is on hand as the area is subject to dust

- Periodically it is a good idea to wipe any dust from the Perspex, so have an anti-static cloth at hand

- Turn off house lights and place book in the middle of the cradle

- Place magnets at either end of the book to secure it

- Begin capture

Post-capture

- Use software to make adjustments / output files

- Edit the book in two parts - left-hand pages and right-hand pages

- Once the left-hand side is completed, click done and repeat for the right-hand side of the book

Transparent material

Equipment used: Kodak Creo Scanner, and Nikon D810 and lightbox

Pre-capture

- Photographer requests a batch of material for digitisation. The material arrives with a spreadsheet which lists each piece in the batch

- Photographer records the delivery in a studio log-book

- Material is arranged in size order

- Material is then arranged in numerical order (this will make it easier when re-numbering)

- Clean the scanner glass using glass cleaner and anti-static wipes before switching the scanner on

- Ensure you have the correct mount for the format of film being digitised

- Ensure the mount is clean before installing into the scanner

- Install film holder

- Put gloves on

- Review the film - use a rocket blower to remove as much dust as possible, as necessary

- Film is loaded into holders - emulsion side down. If unsure which side is the emulsion side - hold the film at a 45-degree angle to overhead lights and move it from side to side. Emulsion side will be duller and reflect less light - the reflective side is what you want to angle away from the scanner’s light

- Begin capture

Post-capture

- Access the scan in editing software

- Spot the dust if required

- Change the DPI from 2000 to 300

- Check levels and apply sharpening if required

- Save

Maintenance

- Keep the glass clean. Clean before every session

- Clean inside scanner monthly

Transparent material

Equipment used: Nikon D810 and lightbox

Pre-capture

- Photographer requests a batch of material for digitisation

- Photographer records the delivery in a studio log-book

- Material is arranged in size order

- Material is then arranged in numerical order (this will make it easier when re-numbering)

- DSLR is attached to the copy stand.

- Ensure camera is level using spirit level

- Ensure the lightbox is level using a spirit level

- Lightbox and camera are turned on

- Ensure any other light that may conflict with the lightbox / camera is turned off

- First negative is placed in mount on the lightbox

- Mask light with card as much as possible. Only light should shine through film

- Ensure the negative fills the frame by looking through the viewfinder

- Mirror Up mode is used to reduce shake

- Use fluorescent white balance setting

- Use manual focus

- Use a narrow aperture - we used f/16 or f/22

- Fire the shutter using the cable release

- Be sure to have spare batteries to hand if shooting tethered

- Review the image using the in-camera light meter if possible. If unsure - underexpose slightly, then adjust and check in Camera Raw

Post-capture

- If not shooting tethered, back up images and isolate most recent in a single folder

- First image is opened in Photoshop

- Create new action, name it and record: Command + I (Invert) > Create new adjustment layer > Black And White > Command + Shift + E (Flatten Image) > Save > Close image > Stop Action

(n.b. skip this step if Photoshop action already exists)

- To execute the action: File > Automate > Batch

- Source folder and destination are selected

- Compatibility is checked - make sure Windows, Mac OS and Unix are selected

- Action is executed

This should invert and convert all images to black and white. Files are now ready to be cropped / adjusted in Camera Raw.

- Editing software is opened and all files that need cropping / adjusting are selected

- Open all images

- Select all and apply lens correction, then crop either individually or with all images selected - making small adjustments as you go

- Make level adjustments if needed

- Use grid to make sure images are straight

- Once images are cropped and levels adjusted - Export the HI RES file

- Save images

Redaction

Be sure to redact images as necessary. All batches must be checked for images that require redaction. Redaction could be for:

- Sensitive data such as personal information

- Images without copyright clearance

What is a subject index?

A subject index describes documents by index terms in order to indicate what the document is about or what the content refers to. For example, an archive photograph of a boat in a harbour may be indexed in terms of the subjects (any people in the boat), objects (boat and other objects represented), surroundings (places, environment) and other contextual information (society, interpersonal relationships, even metaphysical concepts).

At Tate, the subject index is applied to make images of, and information about, our collection even more accessible to the public. Our subject index is designed to provide flexible access points to that information, whether a user requires specific information, has an approximate idea of what s/he needs, or simply wants to browse.

The purpose of a subject index therefore relates to the users it is intended to serve.

All Tate collection works have an index applied - we use the Iconclass classification system. Since archive pieces published for Archives & Access were integrated with artworks on our website, this existing system was applied to the archive collections. The decision was then made to index the materials at item level (a 'piece' describes each page of a sketchbook individually, whereas an 'item' describes the sketchbook as a whole).

The approach to subject indexing was determined by Tate Archive in conversation with colleagues in our Information Systems and Digital departments. The work itself was completed by a dedicated member of staff (the Subject Indexer) who applied the index terms to each digitised archive item.

The subject indexing process

The photography team notified the Subject Indexer by email that a particular collection was uploaded onto iBase.

The Subject Indexer prepared an initial set of keywords that could be block-indexed across the collection, regardless of the individual item’s content. This both saved time and ensured that, for example, users interested in British Surrealism or the Seven and Five Society will retrieve all items by Paul Nash or Barbara Hepworth regardless of the exact dates such artists were affiliated with these movements. We took this decision on the assumption that it was preferable to have too many keywords to wade through than too few, as the latter might lead to a suspicion of gaps and a resultant lack of trust in the system. These ‘primary’ terms are generally limited to: movement, nationality, work and occupation.

A typical example is the set of primary keywords generated for Donald Rodney (TGA 200321/3/1-48):

- Group/movement 20th century post-1945 Black – Arts Movement: BLK Art Group: conceptual art: Pan African Connection: pop art

- Emotions etc. universal concepts history

- People, diseases and conditions illness, sickle cell anaemia

- Society health and welfare, health, government and politics, political protest

- Lifestyle and culture cultural identity, emigration, immigration, social comment colonialism, race

- Nationality British, English, ethnicity black

- Work etc. arts and entertainment artist – non-specific

- Artist – multi-media

- Artist – painter

These keywords are generated with reference to a number of sources, usually:

- Materials present on www.tate.org.uk (such as captions, summaries, catalogue entries, previous indexing)

- Oxford Art Online

- Oxford Dictionary of National Biography

- Relevant guidebooks, histories and catalogues (e.g. The Tate Britain companion to British art; the catalogue Ben Nicholson, Winifred Nicholson : art and life)
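The block-indexing step described above, where one set of primary keywords is applied to every item in a collection before item-specific terms are added, can be sketched as below. The function name, references and terms are illustrative only and do not reflect how iBase stores its index.

```python
def block_index(items: dict, primary_terms: list) -> dict:
    """Return a new index in which every item carries the primary terms.

    items maps an item reference to its own item-specific terms. Primary
    terms come first and duplicates are dropped, preserving order.
    """
    indexed = {}
    for reference, item_terms in items.items():
        merged = list(primary_terms)
        for term in item_terms:
            if term not in merged:
                merged.append(term)
        indexed[reference] = merged
    return indexed


# Illustrative data only; these are not real references.
items = {
    "EXAMPLE/1": ["harbour"],
    "EXAMPLE/2": ["British", "boat"],
}
primary = ["British", "artist - painter"]
indexed = block_index(items, primary)
```

Because the primary terms are applied regardless of content, a search on any of them returns every item in the collection, which is the behaviour the project chose deliberately.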

The Subject Indexer did an initial check of the images on iBase to make sure they matched the archive descriptions. A spreadsheet of any errors was sent to the project delivery team.

The Subject Indexer began looking through the items and generated keywords specific to them. Because of the time constraints of the Archives & Access project, it was decided that a comprehensive index of each item, anticipating every imagined researcher present and future, would be too time-consuming and probably impossible. Particular subject areas were therefore prioritised: ‘proper nouns’ (all people, places, and named artworks) were always indexed, while more abstract or more ‘everyday’ concepts (e.g. emotions, parts of the body) were referenced as they seemed relevant, or as they matched other collections in the project. Keywords were selected from the iBase thesaurus that has been compiled since the original ‘Art and Artists’ indexing (from 2000 onwards), with new terms added when necessary.